AI is a great analyst – but with a memory frozen in time. It can connect facts, draw conclusions, and write like an expert. The problem is that its “world” ends at a certain point. For businesses, this means one thing: without access to up-to-date data, even the best model can lead to incorrect decisions. That is why the real value of AI today does not lie in the technology itself, but in how you connect it to reality.

1. What is knowledge cutoff and why does it exist

Knowledge cutoff is the boundary date after which a model does not have guaranteed (and often any) “built-in” knowledge, because it was not trained on newer data. Providers usually describe this explicitly: for example, in the documentation of models by OpenAI, cutoff dates are listed (for specific model variants), and product notes often mention a “newer knowledge cutoff” in subsequent generations. Why does this happen at all? In simple terms: training models is costly, multi-stage, and requires strict quality and safety controls; therefore, the knowledge embedded in the model’s parameters reflects the state of the world at a specific point in time, rather than its continuous changes. A model is first trained on a large dataset, and once deployed, it no longer learns on its own – it only uses what it has learned before. Research on retrieval has long highlighted this fundamental limitation: knowledge “embedded” in parameters is difficult to update and scale, which is why approaches were developed that combine parametric memory (the model) with non-parametric memory (document index / retriever). This concept is the foundation of solutions such as RAG and REALM. In practice, some providers introduce an additional distinction: besides “training data cutoff”, they also define a “reliable knowledge cutoff” (the period in which the model’s knowledge is most complete and trustworthy). This is important from a business perspective, as it shows that even if something existed in the training data, it does not necessarily mean it is equally stable or well “retained” in the model’s behavior.

2. How cutoff affects the reliability of business responses

The most important risk may seem trivial: the model may not know events that occurred after the cutoff, so when asked about the current state of the market or operational rules, it will “guess” or generalize. Providers explicitly recommend using tools such as web or file search to bridge the gap between training and the present.

In practice, three types of problems emerge:

The first is outdated information: the model may provide information that was correct in the past but is incorrect today. This is particularly critical in scenarios such as:

- customer support (changed warranty terms, new pricing, discontinued products),

- sales and procurement (prices, availability, exchange rates, import regulations),

- compliance and legal (regulatory changes, interpretations, deadlines),

- IT/operations (incidents, service status, software versions, security policies). The mere fact that models have formally defined cutoff dates in their documentation is a clear signal: without retrieval, you should not assume accuracy.

The second is hallucinations and overconfidence: LLMs can generate linguistically coherent responses that are factually incorrect – including “fabricated” details, citations, or names. This phenomenon is so common that extensive research and analyses exist, and providers publish dedicated materials explaining why models “make things up.”

The third is a system-level business error: the real cost is not that AI “wrote a poor sentence”, but that it fed an operational decision with outdated information. Implementation guidelines emphasize that quality should be measured through the lens of cost of failure (e.g., incorrect returns, wrong credit decisions, faulty commitments to customers), rather than the “niceness” of the response.

In practice, this means that in a business environment, model responses should be treated as:

- support for analysis and synthesis, when context is provided (RAG/API/web),

- a hypothesis to be verified, when the question involves dynamic facts.

3. Methods to overcome cutoff and access up-to-date knowledge at query time

Below are the technical and product approaches most commonly used in business implementations to “close the gap” created by knowledge cutoff. The key idea is simple: the model does not need to “know” everything in its parameters if it can retrieve the right context just before generating a response.

3.1 Real-time web search

This is the most intuitive approach: the LLM is given a “web search” tool and can retrieve fresh sources, then ground its response in search results (often with citations). In the documentation of several providers, this is explicitly described as operating beyond its knowledge cutoff.

For example:

- a web search tool in the API can enable responses with citations, and the model – depending on configuration – decides whether to search or answer directly,

- some platforms also return grounding metadata (queries, links, mapping of answer fragments to sources), which simplifies auditing and building UIs with references.

3.2 Connecting to APIs and external data sources

In business, the “source of truth” is often a system: ERP, CRM, PIM, pricing engines, logistics data, data warehouses, or external data providers. In such cases, instead of web search, it is better to use an API call (tool/function) that returns a “single version of truth”, while the model is responsible for:

- selecting the appropriate query,

- interpreting the result,

- presenting it to the user in a clear and understandable way. This pattern aligns with the concept of “tool use”: the model generates a response only after retrieving data through tools.

3.3 Retrieval-Augmented Generation (RAG)

RAG is an architecture in which a retrieval step (searching within a document corpus) is performed before generating a response, and the retrieved fragments are then added to the prompt. In the literature, this is described as combining parametric and non-parametric memory.

In business practice, RAG is most commonly used for:

- product instructions and operational procedures,

- internal policies (HR, IT, security),

- knowledge bases (help centers),

- technical documentation, contracts, and regulations,

- project repositories (notes, architectural decisions).

An important observation from implementation practices: RAG is particularly useful when the model lacks context, when its knowledge is outdated, or when proprietary (restricted) data is required.

3.4 Fine-tuning and “continuous learning”

Fine-tuning is useful, but it is not the most efficient way to incorporate fresh knowledge. In practice, fine-tuning is mainly used to:

- improve performance for a specific type of task,

- achieve a more consistent format or tone,

- or reach similar results at lower cost (fewer tokens / smaller model).

If the challenge is data freshness or business context, implementation guidelines more often point toward RAG and context optimization rather than “retraining the model”.

“Continuous learning” (online learning) in foundation models is rarely used in practice – instead, we typically see periodic releases of new model versions and the addition of retrieval/tooling as a layer that provides up-to-date information at query time. A good indicator of this is that model cards often describe models as static and trained offline, with updates delivered as “future versions”.

3.5 Hybrid systems

The most common “optimal” enterprise setup is a hybrid:

- RAG for internal company documents,

- APIs for transactional and reporting data,

- web search only in controlled scenarios (e.g., market analysis), with enforced attribution and source filtering.

Comparison of methods

| Method | Freshness | Cost | Implementation complexity | Risk | Scalability |

|---|---|---|---|---|---|

| RAG (internal documents) | high (as fresh as the index) | medium (indexing + storage + inference) | medium-high | medium (data quality, prompt injection in retrieval) | high |

| Live web search | very high | variable (tools + tokens + vendor dependency) | low-medium | high (web quality, manipulation, compliance) | high (but dependent on limits and costs) |

| API integrations (source systems) | very high (“single source of truth”) | medium (integration + maintenance) | medium | medium (integration errors, access, auditing) | very high |

| Fine-tuning | medium (depends on training data freshness) | medium-high | medium-high | medium (regressions, drift, version maintenance) | high (with mature MLOps processes) |

Behind this table are two important facts: (1) RAG and retrieval are consistently identified as key levers for improving accuracy when the issue is missing or outdated context, and (2) web search tools are often described as a way to access information beyond the knowledge cutoff, typically with citations.

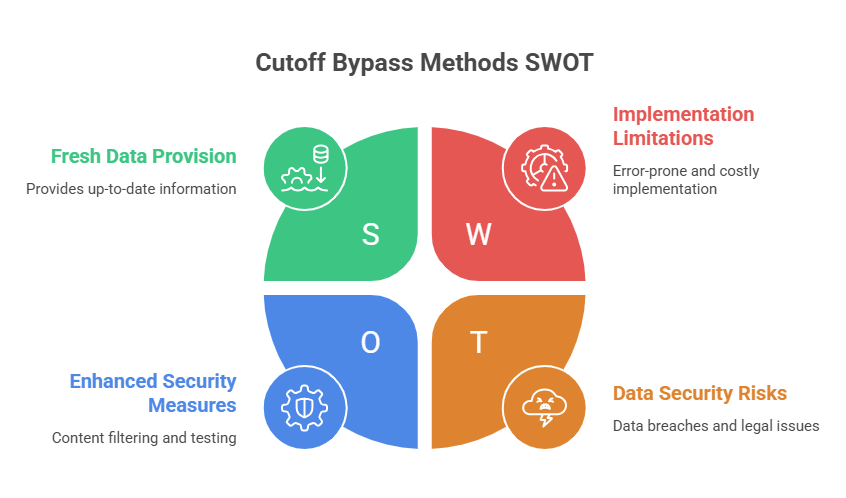

4. Limitations and risks of cutoff mitigation methods

The ability to “provide fresh data” does not mean the system suddenly becomes error-free. In business, what matters are the limitations that ultimately determine whether an implementation is safe and cost-effective.

4.1 Quality and “truthfulness” of sources

Web search and even RAG can introduce content into the context that is:

- incorrect, incomplete, or outdated,

- SEO spam or intentionally manipulative content,

- inconsistent across sources. This is why it is becoming standard practice to provide citations/sources and enforce source policies for sensitive domains (finance, law, healthcare).

4.2 Prompt injection

In systems with tools, the attack surface increases. The most common risk is prompt injection: a user (or content within a data source) attempts to force the model into performing unintended actions or bypassing rules.

Particularly dangerous in enterprise environments is indirect prompt injection: malicious instructions are embedded in data sources (e.g., documents, emails, web pages retrieved via RAG or search) and only later introduced into the prompt as “context”. This issue is already widely discussed in both academic research and security analyses. For businesses, this means adding additional layers: content filtering, scanning, clear rules on what tools are allowed to do, and red-team testing.

4.3 Privacy, data residency, and compliance boundaries

In practice, “freshness” often comes at the cost of data leaving the trusted boundary.

- In API environments, retention mechanisms and modes such as Zero Data Retention can be configured, but it is important to understand that some features (e.g., third-party tools, connectors) have their own retention policies.

- Some web search integrations (e.g., in specific cloud services) explicitly warn that data may leave compliance or geographic boundaries, and that additional data protection agreements may not fully cover such flows. This has direct legal and contractual implications, especially in the EU.

- Certain web search tools have variants that differ in their compatibility with “zero retention” (e.g., newer versions may require internal code execution to filter results, which changes privacy characteristics).

4.4 Latency and costs

Every additional step (web search, retrieval, API calls, reranking) introduces:

- higher latency,

- higher cost (tokens + tool / API call fees),

- greater maintenance complexity.

Model documentation clearly shows that search-type tools may be billed separately (“fee per tool call”), and web search in cloud services has its own pricing.

4.5 The risk of “good context, wrong interpretation”

Even with excellent retrieval, the model may:

- draw the wrong conclusion from the context,

- ignore a key passage,

- or “fill in” missing elements.

That is why mature implementations include validation and evaluation, not just “a connected index”.

5. Comparing competitor approaches

The comparison below is operational in nature: not who has the better benchmark, but how providers solve the problem of freshness and data integration.

The common denominator is that every major provider now recognizes that “knowledge in the parameters” alone is not enough and offers grounding / retrieval tools or search partnerships.

5.1 Comparison of vendors and update mechanisms

| Vendor | Model family (examples) | Update / grounding mechanisms | Real-time availability | Integrations (typical) |

|---|---|---|---|---|

| OpenAI | GPT | API tools: web search + file search (vector stores) during the conversation; periodic model / cutoff updates | yes (web search), depending on configuration | vector stores, tools, connectors / MCP servers (external) |

| Gemini / (historically: PaLM) | Grounding with Google Search; grounding metadata and citations returned | yes (Search) | Google ecosystem integrations (tools, URL context) | |

| Anthropic | Claude | Web search tool in the API with citations; tool versions differ in filtering and ZDR properties | yes (web search) | tools (tool use), API-based integrations |

| Microsoft | Copilot / models in Azure | Web search (preview) in Azure with grounding (Bing); retrieval and grounding in M365 data via semantic indexing / Graph | yes (web), yes (M365 retrieval) | M365 (SharePoint / OneDrive), semantic index, web grounding |

| Meta Platforms | Llama / Meta AI | For open-weight models: updates via new model releases; in products: search partnerships for real-time information | yes (in Meta AI via search partnerships) | open-source ecosystem + integrations in Meta apps |

At the source level, web search and file search are explicitly described as a “bridge” between cutoff and the present in APIs. Google documents Search grounding as real-time and beyond knowledge cutoff, with citations. Anthropic documents its web search tool and automatic citations, as well as ZDR nuances depending on the tool version. Microsoft describes web search (preview) with grounding and important legal implications of data flows; separately, it describes semantic indexing as grounding in organizational data. Meta explicitly states that its search partnerships provide real-time information in chats and also publishes cutoff dates in Llama model cards (e.g. Llama 3).

It is also worth noting that some vendors provide fairly precise cutoff dates for successive model versions (e.g. in product notes and model cards), which is a practical signal for business: “version your dependencies, measure regressions, and plan upgrades.”

6. Recommendations for companies and example use cases

This section is intentionally pragmatic. I do not know your specific parameters (industry, scale, budget, error tolerance, legal requirements, data geographies). For that reason, these recommendations are a decision-making template that should be tailored.

6.1 Reference architecture for business

A layered architecture tends to work best:

Data and source layer:

- “systems of truth” (ERP / CRM / BI) via API,

- unstructured knowledge (documents) via RAG,

- the external world (web) only where it makes sense and complies with policy.

Orchestration and policy layer:

- query classification: Is freshness needed? Is this a factual question? Is web access allowed?

- source policy: allowlist of domains / types, trust tiers, citation requirements,

- action policy: what the model is allowed to do (e.g. it cannot “on its own” send an email or change a record without approval).

Quality and audit layer:

- logs: question, tools used, sources, output,

- regression tests (sets of business questions),

- metrics: accuracy@k for retrieval, percentage of answers with citations, response time, cost per 1,000 queries,

- escalation to a human when the model has no sources or uncertainty is detected.

6.2 Verification processes, SLAs, and monitoring

Practices that make the difference:

- Define the SLA not as “the LLM is always right”, but in terms of response time, minimum citation level, maximum cost per query, and maximum incident rate (e.g. incorrect information in critical categories). The point of reference is the cost of failure described in quality optimization guidance.

- Introduce risk classes: “informational” vs “operational” (e.g. an automatic system change). For operational cases, apply approvals and limited agency (human-in-the-loop).

- For web search and external tools, verify the legal implications of data flows (geo boundary, DPA, retention).

If you operate in the EU and your use case may fall into regulated categories (e.g. decisions related to employment, credit, education, infrastructure), it is worth mapping requirements in terms of risk management systems and human oversight – this is the direction increasingly formalized by law and standards.

6.3 Short case studies

Customer service (contact center + knowledge base)

Goal: shorten response times and standardize communication.

Architecture: RAG on an up-to-date knowledge base + permissions to retrieve order statuses via API + no web search (to avoid conflicts with policy).

Risk: prompt injection through ticket / email content; in practice, you need filtering and a clear distinction between “content” and “instruction”.

Market analysis (research for sales / strategy)

Goal: quickly summarize trends and market signals.

Architecture: web search with citations + source policy (tier 1: official reports, regulators, data agencies; tier 2: industry media) + mandatory citations in the response.

Risk: low-quality or manipulated sources; this is why citations and source diversity are critical.

Compliance / internal policies

Goal: answer employees’ questions about what is allowed under current procedures.

Architecture: RAG only on approved document versions + versioning + source logging.

Risk: index freshness and access control; this fits well with solutions that keep data in place and respect permissions.

7. Summary and implementation checklist

Knowledge cutoff is not a “flaw” of any particular vendor – it is a feature of how large models are trained and released. Business reliability, therefore, does not come from searching for a “model without cutoff”, but from designing a system that delivers fresh context at query time and keeps risks under control.

7.1 Implementation checklist

-

- Identify categories of questions that require freshness (e.g. pricing, law, statuses) and those that can rely on static knowledge.

- Choose a freshness mechanism: API (system of record) / RAG (documents) / web search (market) – do not implement everything at once in the first iteration.

- Define a source policy and citation requirement (especially for market analysis and factual claims).

- Introduce safeguards against prompt injection (direct and indirect): content filtering, separation of instructions from data, red-team testing.

- Define retention, data residency, and rules for transferring data to external services (geo boundary / DPA / ZDR).

- Build an evaluation set (based on real-world cases), measure the cost of errors, and define escalation thresholds to a human.

- Plan versioning and updates: both for models (upgrades) and indexes (RAG refreshes).

8. AI without up-to-date data is a risk. How can you prevent it?

In practice, the biggest challenge today is not AI adoption itself, but ensuring that AI has access to current, reliable data. Real value – or real risk – emerges at the intersection of language models, source systems, and business processes. At TTMS, we help design and implement architectures that connect AI with real-time data – from system integrations and RAG solutions to quality control and security mechanisms. If you are wondering how to apply this approach in your organization, the best place to start is a conversation about your specific scenarios. Contact us!

FAQ

Can AI make business decisions without access to up-to-date data?

In theory, a language model can support decisions based on patterns and historical knowledge, but in practice this is risky. In many business processes, changing data is critical – prices, availability, regulations, or operational statuses. Without taking that into account, the model may generate recommendations that sound logical but are no longer valid. The problem is that such answers often sound highly credible, which makes errors harder to detect.

That is why, in business environments, AI should not be treated as an autonomous decision-maker, but as a component that supports a process and always has access to current data or is subject to control. In practice, this means integrating AI with source systems and introducing validation mechanisms. In many cases, companies also use a human-in-the-loop approach, where a person approves key decisions. This is especially important in areas such as finance, compliance, and operations.

How can you tell if AI in a company is working with outdated data?

The most common signal is subtle inconsistencies between AI responses and operational reality. For example, the model may provide outdated prices, incorrect procedures, or refer to policies that have already changed. The challenge is that isolated mistakes are often ignored until they begin to affect business outcomes.

A good approach is to introduce control tests – a set of questions that require up-to-date knowledge and quickly reveal the system’s limitations. It is also worth analyzing response logs and comparing them with system data. In more advanced implementations, companies use response-quality monitoring and alerts whenever potential inconsistencies are detected.

Another key question is whether the AI “knows that it does not know.” If the model does not signal that it lacks current data, the risk increases. That is why more and more organizations implement mechanisms that require the model to indicate the source of information or its level of confidence.

Does RAG solve all problems related to data freshness?

RAG significantly improves access to current information, but it is not a universal solution. Its effectiveness depends on the quality of the data, the way it is indexed, and the search mechanisms used. If documents are outdated, inconsistent, or poorly prepared, the system will still return inaccurate or misleading answers.

Another challenge is context. The model may receive correct data but still interpret it incorrectly or ignore a critical fragment. That is why RAG requires not only infrastructure, but also content governance and data-quality management. In practice, this means regularly updating indexes, controlling document versions, and testing outputs.

In many cases, RAG works best as part of a broader system that combines multiple data sources, such as documents, APIs, and operational data. Only this kind of setup makes it possible to achieve both high quality and strong reliability.

What are the biggest hidden costs of implementing AI with data access?

The most underestimated cost is usually integration. Connecting AI to systems such as ERP, CRM, or data warehouses requires architecture work, security safeguards, and often adjustments to existing processes. Another major cost is maintenance – updating data, monitoring response quality, and managing access rights.

Then there is the cost of errors. If an AI system makes the wrong decision or gives a customer incorrect information, the consequences may be far greater than the cost of the solution itself. That is why more companies are evaluating ROI not only in terms of automation, but also in terms of risk reduction.

It is also important to consider operational costs, such as latency and resource consumption when using external tools and APIs. In the end, the most cost-effective solutions are those designed properly from the start, not those that are simply “bolted on” to existing processes.

Can AI be implemented in a company without risking data security?

Yes, but it requires a deliberate architectural approach. The key issue is determining what data the model is allowed to process and where that data is physically stored. In many cases, organizations use solutions that do not move data outside the company’s trusted environment, but instead allow it to be searched securely in place.

Access-control mechanisms are also essential. AI should only be able to see the data that a given user is authorized to access. In more advanced systems, companies also apply anonymization, data masking, and full logging of all operations.

It is equally important to consider threats such as prompt injection, which may lead to unauthorized access to information. That is why AI implementation should be treated like any other critical system – with full attention to security policies, audits, and monitoring. With the right approach, AI can be not only secure, but can actually improve control over data and processes.