Digital process automation has transformed from a back-office efficiency tool into a strategic imperative that shapes how organizations compete and deliver value. Many companies still rely on processes spread across emails, spreadsheets, approval chains, and disconnected systems. What looks manageable on paper often creates delays, rework, inconsistent decisions, and unnecessary operating costs at scale.

This is why digital process automation has moved far beyond basic task automation. It helps organizations connect systems, standardize workflows, reduce manual effort, and make processes faster, more reliable, and easier to control. In practice, that means shorter cycle times, fewer errors, better compliance, and a smoother experience for both employees and customers.

In this article, we look at the real benefits of digital process automation, where it creates the most business value, and what organizations should consider before implementation.

1. What Digital Process Automation Means in 2026

Digital process automation (DPA) is the automation of end-to-end business processes across systems, data, and people. Instead of focusing on single tasks, it connects entire workflows – from data input and validation to decision-making and final output.

Traditional automation typically handles isolated activities, such as sending notifications or updating records. DPA goes further by coordinating multiple steps, systems, and stakeholders into one continuous process. This allows organizations to reduce manual handoffs, eliminate bottlenecks, and maintain consistency across operations.

In practice, DPA is used to automate processes such as customer onboarding, invoice processing, loan approvals, or internal approval workflows. For example, instead of manually reviewing documents, transferring data between systems, and sending emails, a DPA solution can validate input, route tasks automatically, trigger decisions based on rules or AI, and notify relevant stakeholders in real time.
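To make the validate, route, and notify pattern more concrete, here is a minimal Python sketch for a hypothetical approval request. The `Request` fields, the amount thresholds, the required document names, and the queue names are all illustrative assumptions, not features of any particular DPA platform. `notify` only builds a message and does not send anything.

```python
from dataclasses import dataclass, field

# Hypothetical approval request; field names are illustrative assumptions.
@dataclass
class Request:
    request_id: str
    amount: float
    department: str
    documents: list[str] = field(default_factory=list)

# Hypothetical business rules: (condition, target queue), checked in order.
ROUTING_RULES = [
    (lambda r: r.amount > 50_000, "cfo_approval"),
    (lambda r: r.amount > 5_000, "manager_approval"),
]
DEFAULT_QUEUE = "auto_approve"          # low-value requests skip manual review
REQUIRED_DOCUMENTS = {"invoice", "purchase_order"}


def validate(request: Request) -> list[str]:
    """Return validation problems; an empty list means the input is valid."""
    problems = []
    if request.amount <= 0:
        problems.append("amount must be positive")
    missing = REQUIRED_DOCUMENTS - set(request.documents)
    if missing:
        problems.append(f"missing documents: {sorted(missing)}")
    return problems


def route(request: Request) -> str:
    """Validate first, then send the request to the first queue whose rule matches."""
    if validate(request):
        return "exception_handling"     # invalid input goes to a person for review
    for condition, queue in ROUTING_RULES:
        if condition(request):
            return queue
    return DEFAULT_QUEUE


def notify(request: Request, queue: str) -> str:
    # Placeholder for an email or chat notification; it only builds the message text.
    return f"Request {request.request_id} routed to {queue}"


if __name__ == "__main__":
    req = Request("R-1001", 12_000, "finance", ["invoice", "purchase_order"])
    print(notify(req, route(req)))  # Request R-1001 routed to manager_approval
```

Because the rules live in a data structure rather than inside the routing logic, thresholds can be adjusted without rewriting the workflow. This is the same idea behind the configurable business rules that low-code platforms expose to business users.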

What makes DPA particularly relevant today is the increasing complexity of business environments. Organizations operate across multiple systems and channels, while expectations for speed, accuracy, and compliance continue to grow. DPA addresses this by creating structured, scalable processes that can adapt to changing business needs without constant manual intervention.

2. Operational Benefits of Digital Process Automation

2.1 Improved Efficiency and Productivity

Digital process automation improves efficiency by eliminating repetitive manual tasks and reducing the need for constant human intervention across complex workflows.

In many organizations, employees spend a significant portion of their time on activities such as entering data into multiple systems, verifying information, forwarding requests, or following up on approvals. Industry research suggests that a large share of operational work, often estimated at 20-30%, is repetitive and can be automated.

By automating these steps, DPA ensures that processes move forward without unnecessary interruptions. Data can be captured once and reused across systems, tasks can be triggered instantly, and approvals can be routed automatically based on predefined rules. This significantly reduces process cycle times and minimizes idle waiting periods between steps.

In practice, organizations often report noticeable improvements in throughput and processing speed after implementing automation, especially in processes that previously relied on multiple manual handoffs.

As a result, teams can handle higher volumes of work with the same resources while focusing more on activities that require expertise, judgment, and direct interaction with customers or partners. Over time, this leads to measurable gains in productivity and a more efficient allocation of organizational capacity.

2.2 Error Reduction and Quality Improvement

Digital process automation significantly reduces the risk of errors by standardizing how processes are executed and limiting the reliance on manual input.

In many organizations, errors occur during repetitive activities such as data entry, document handling, or transferring information between systems. Even small inconsistencies at these stages can lead to incorrect decisions, delays, or the need for costly corrections later in the process. Industry studies suggest that manual data handling is one of the most common sources of operational errors, especially in processes involving multiple handoffs.

DPA addresses these issues by enforcing validation rules at every step of the workflow. Data can be checked automatically upon entry, required fields cannot be skipped, and processes follow predefined paths without relying on individual interpretation. This ensures that each case is handled in a consistent and controlled manner.
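The sketch below illustrates validation on entry in Python, using a hypothetical customer onboarding form. The field names, the list of supported countries, and the deliberately simple email pattern are placeholders, and a real system would rely on its own platform's validation features. Each rule declares whether a field is required, how to check it, and which error to report, so every submission is judged by the same criteria.

```python
import re
from typing import Callable

# A rule is (required?, check function, error message). All rules below are hypothetical.
Rule = tuple[bool, Callable[[str], bool], str]

ONBOARDING_RULES: dict[str, Rule] = {
    "full_name": (True, lambda v: len(v.strip()) >= 2, "name is too short"),
    # Deliberately simple pattern: something@something.something
    "email": (True, lambda v: re.fullmatch(r"[^@\s]+@[^@\s]+\.[^@\s]+", v) is not None,
              "email format is invalid"),
    "country": (True, lambda v: v.upper() in {"PL", "DE", "GB", "US"}, "country is not supported"),
    "tax_id": (False, lambda v: v.isalnum(), "tax ID must be alphanumeric"),
}


def validate_entry(data: dict[str, str], rules: dict[str, Rule] = ONBOARDING_RULES) -> dict[str, str]:
    """Check submitted data against every rule and return {field: error} for each failure."""
    errors: dict[str, str] = {}
    for name, (required, check, message) in rules.items():
        value = data.get(name, "")
        if not value:
            if required:
                errors[name] = "required field is missing"  # required fields cannot be skipped
            continue  # optional and empty: nothing to check
        if not check(value):
            errors[name] = message
    return errors


if __name__ == "__main__":
    print(validate_entry({"full_name": "Ada Lovelace", "email": "ada@example.com", "country": "GB"}))
    # {}
    print(validate_entry({"full_name": "A", "email": "not-an-email"}))
    # {'full_name': 'name is too short', 'email': 'email format is invalid', 'country': 'required field is missing'}
```

Returning every error at once, instead of stopping at the first one, lets the submitter fix all problems in a single pass. This is one small way validation reduces back-and-forth rework.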

In addition, decision points can be supported by business rules or AI-based models, reducing variability and ensuring that similar inputs lead to consistent outcomes. This is particularly important in high-volume environments, where even a small error rate can scale into significant operational risk.

As a result, organizations benefit from higher data quality, fewer exceptions, and a substantial reduction in rework. Over time, this not only improves operational reliability but also contributes to better customer experience and stronger compliance with internal and regulatory requirements.

2.3 Enhanced Operational Visibility and Control

Digital process automation provides organizations with real-time visibility into how their processes operate, enabling better control over execution and performance.

In manual or fragmented environments, it is often difficult to determine the exact status of a process, identify where delays occur, or understand how long individual steps take. Information is typically spread across emails, spreadsheets, and multiple systems, making it challenging to build a complete and accurate picture of operations.

With DPA, every step of a process is tracked and recorded in a structured and centralized way. Organizations can monitor the progress of individual cases in real time, see which tasks are completed, which are pending, and where bottlenecks are forming. This level of transparency allows teams to react quickly to issues and prevent minor delays from escalating into larger operational problems.

In addition, process data can be analyzed to identify patterns, inefficiencies, and areas for optimization. Many organizations use this visibility to continuously improve workflows, reduce cycle times, and make more informed operational decisions based on actual performance data rather than assumptions.
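As a rough illustration of how structured tracking supports this kind of analysis, the Python sketch below works on a small, made-up event log. Each row records a case, a process step, and the step's start and finish times. The log format, case IDs, and step names are assumptions for the example, and real DPA platforms store and expose this data in their own ways. From the log, the sketch lists pending work and computes the average duration of each step, which points to the most likely bottleneck.

```python
from collections import defaultdict
from datetime import datetime
from typing import Optional

# Hypothetical event log: (case_id, step, started, finished); finished is None while pending.
Event = tuple[str, str, str, Optional[str]]
EVENTS: list[Event] = [
    ("C-1", "intake",   "2026-01-05 09:00", "2026-01-05 09:10"),
    ("C-1", "review",   "2026-01-05 09:10", "2026-01-06 15:10"),
    ("C-1", "approval", "2026-01-06 15:10", "2026-01-06 16:10"),
    ("C-2", "intake",   "2026-01-05 10:00", "2026-01-05 10:20"),
    ("C-2", "review",   "2026-01-05 10:20", None),
]
TS_FORMAT = "%Y-%m-%d %H:%M"


def pending_steps(events: list[Event]) -> list[tuple[str, str]]:
    """Steps that have started but not finished, i.e. work currently waiting."""
    return [(case, step) for case, step, _start, end in events if end is None]


def average_step_hours(events: list[Event]) -> dict[str, float]:
    """Average duration of each step in hours, counting completed executions only."""
    durations: dict[str, list[float]] = defaultdict(list)
    for _case, step, start, end in events:
        if end is not None:
            delta = datetime.strptime(end, TS_FORMAT) - datetime.strptime(start, TS_FORMAT)
            durations[step].append(delta.total_seconds() / 3600)
    return {step: sum(hours) / len(hours) for step, hours in durations.items()}


def slowest_step(events: list[Event]) -> str:
    """The step with the longest average duration: a candidate bottleneck."""
    averages = average_step_hours(events)
    return max(averages, key=averages.get)


if __name__ == "__main__":
    print(pending_steps(EVENTS))   # [('C-2', 'review')]
    print(slowest_step(EVENTS))    # review
```

With five events the answer is obvious by eye. Applied to thousands of cases, however, the same calculation shows where work is actually accumulating.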

Enhanced visibility also strengthens control and governance. Organizations can enforce process rules, maintain complete audit trails, and ensure that workflows are executed in line with internal policies and regulatory requirements. This is particularly important in industries where compliance, traceability, and accountability are critical.

2.4 Scalability Without Proportional Resource Increases

As organizations grow, manual processes often become a bottleneck that limits their ability to scale efficiently.

An increase in transaction volumes, customer requests, or internal operations typically leads to a proportional increase in workload. In traditional environments, this means hiring more staff, increasing operational costs, and adding complexity to coordination across teams. Over time, this approach becomes difficult to sustain and reduces overall agility.

Digital process automation changes this dynamic by allowing organizations to scale processes without a corresponding increase in resources. Once a workflow is automated, it can handle significantly higher volumes with minimal additional effort, as execution is driven by systems rather than manual input.

This is particularly valuable in scenarios such as rapid business growth, expansion into new markets, or seasonal spikes in demand. Instead of building larger teams to absorb increased workload, organizations can rely on automated processes to maintain performance and consistency.

Importantly, scalability through automation does not come at the expense of quality. Processes continue to follow the same rules, validation mechanisms, and decision logic, ensuring that outcomes remain consistent even as volume increases.

As a result, organizations can grow faster, respond more flexibly to changing demand, and maintain control over operational costs without overburdening their teams.

3. Financial Benefits of Process Automation

3.1 Cost Reduction Across Business Functions

Digital process automation reduces operational costs by eliminating manual work, minimizing errors, and improving resource utilization across business processes.

In traditional environments, a significant portion of operational costs is driven by repetitive administrative tasks, rework caused by errors, and time spent coordinating activities across teams. These inefficiencies are often difficult to measure directly but accumulate over time, creating a substantial financial burden.

By automating routine activities such as data entry, document processing, and approvals, organizations can reduce the need for manual labor in process execution. This allows teams to operate more efficiently without increasing headcount, while also lowering the cost associated with delays and process inconsistencies.

In addition, fewer errors mean fewer corrections, fewer escalations, and less time spent resolving issues. Over time, this translates into measurable cost savings and a more predictable cost structure across operations.

3.2 Faster Time-to-Value for New Initiatives

Market opportunities often have narrow windows, and organizations that cannot act quickly risk losing potential value.

In traditional environments, launching new processes or improving existing ones often requires extensive coordination between teams, system changes, and manual configuration. As a result, organizations may wait weeks or even months before seeing measurable outcomes from their initiatives.

Digital process automation significantly shortens the time required to deliver value from new initiatives by reducing the complexity of implementation and minimizing manual coordination.

With DPA, processes can be designed, configured, and deployed much faster, particularly when using low-code or configurable platforms. This allows organizations to move from idea to execution in a significantly shorter timeframe and start realizing value earlier.

In practice, organizations often report that implementation timelines can be reduced from months to weeks, while individual process steps that previously required hours or days can be completed in minutes once automated. These gains tend to be most pronounced in high-volume, process-driven environments.

Faster time-to-value not only improves the financial return on new initiatives but also enables organizations to respond more quickly to market changes, test new solutions, and scale successful processes without long implementation cycles.

3.3 Better Resource Allocation and Utilization

Organizations often struggle not with a lack of resources, but with how those resources are allocated and utilized across processes.

In many cases, skilled employees spend a significant portion of their time on repetitive, low-value tasks such as data entry, document verification, or coordinating routine activities between teams. This leads to underutilization of expertise and limits the organization’s ability to focus on more strategic work.

Digital process automation helps address this imbalance by shifting routine, rule-based activities from people to systems. Tasks that do not require human judgment can be executed automatically, allowing employees to focus on areas where their skills create the most value, such as problem-solving, decision-making, and customer interaction.

As a result, organizations can make better use of their existing workforce without the immediate need to increase headcount. Teams become more focused, workloads are distributed more effectively, and managers gain greater flexibility in assigning resources based on business priorities rather than operational constraints.

In addition, improved resource utilization supports better planning and capacity management. With more predictable and structured processes, organizations can more accurately estimate workload, allocate resources efficiently, and respond more effectively to changing demand.

4. Customer Experience and Service Benefits

4.1 Faster Response Times and Service Delivery

Customers increasingly expect fast and seamless service, and delays in processing requests can directly impact their perception of an organization.

In manual environments, response times are often affected by internal inefficiencies such as waiting for approvals, transferring information between systems, or relying on multiple teams to complete a single request. These delays can lead to frustration, especially when customers expect quick answers or immediate action.

Digital process automation significantly reduces response and processing times by eliminating unnecessary steps and enabling processes to move forward without manual intervention. Requests can be validated, routed, and processed automatically, ensuring that customers receive faster and more predictable service.

As a result, organizations are better equipped to meet rising customer expectations and deliver a more responsive service experience across channels.

4.2 Consistent, Reliable Customer Interactions

Consistency is a key factor in building trust with customers, yet it is difficult to achieve when processes rely heavily on manual execution and individual decision-making.

Inconsistent handling of similar cases, missing information, or variations in response quality can negatively affect the overall customer experience. These issues are particularly visible in high-volume environments, where even small inconsistencies can scale quickly.

Digital process automation helps standardize how requests are handled by enforcing predefined workflows, validation rules, and decision logic. This ensures that each customer interaction follows the same structure, regardless of who is involved in the process.

As a result, organizations can deliver more reliable and predictable service, reducing the risk of errors and improving the overall perception of quality.

4.3 Personalization at Scale

Modern customers expect businesses to understand their preferences, anticipate their needs, and tailor interactions accordingly. DPA platforms can combine automation with analytics to deliver personalized experiences across large customer populations. Systems track customer behavior, preferences, and history to inform automated interactions. Machine learning models identify patterns that signal specific needs or preferences, and automated workflows adapt communications, recommendations, and service approaches to individual profiles.

5. Strategic and Competitive Advantages

5.1 Higher Customer Satisfaction and Retention

Faster and more consistent processes have a direct impact on customer satisfaction and long-term relationships. When customers receive timely responses, accurate information, and a smooth experience across interactions, they are more likely to trust the organization and continue using its services. Conversely, delays, errors, or repeated requests for the same information can quickly erode satisfaction and lead to customer churn.

By improving both speed and consistency, digital process automation creates a more seamless and frictionless customer journey. Customers spend less time waiting, repeating actions, or clarifying issues, which leads to a more positive overall experience.

Over time, this translates into higher customer retention, stronger relationships, and increased lifetime value, making customer experience improvements a key driver of business success.

5.2 Data-Driven Decision Making Capabilities

Effective decision-making depends on access to accurate, timely, and consistent data, yet many organizations still rely on fragmented information spread across multiple systems.

In traditional environments, data is often incomplete, outdated, or difficult to consolidate, especially when processes involve manual steps and multiple handoffs. As a result, decisions are frequently based on assumptions, partial visibility, or delayed reporting.

Digital process automation addresses this challenge by capturing and structuring data at every stage of a process. Each action, decision point, and outcome is recorded in a consistent way, creating a reliable source of operational data that can be analyzed in real time.

This enables organizations to gain deeper insight into process performance, identify trends, and detect inefficiencies that would otherwise remain hidden. Organizations that effectively leverage data and advanced technologies often achieve significantly higher returns on their digital investments, as highlighted in industry research.

In addition, structured process data can support more advanced capabilities such as predictive analysis, performance optimization, and continuous improvement initiatives. Over time, this shifts organizations from reactive decision-making to a more proactive and data-driven approach.

5.3 Agility to Adapt to Market Changes

Market conditions shift rapidly. Customer preferences evolve, competitors launch new offerings, regulations change, and economic factors create new constraints or opportunities. Automated processes provide flexibility that manual operations cannot match: digital workflows can be modified and redeployed far more quickly than staff can be retrained or departments reorganized.

This agility creates strategic options. Organizations can experiment with new business models, test market approaches, or enter new segments without massive upfront investments. The ability to pivot quickly reduces risks associated with strategic initiatives while increasing potential rewards.

5.4 Employee Satisfaction and Retention

Talent acquisition and retention are challenges for organizations across industries, and process automation can significantly improve employee satisfaction. Professionals freed from tedious, repetitive tasks can focus on work that uses their skills and education. Creative problem-solving, strategic thinking, and relationship building are more fulfilling than data entry or manual processing.

Retained employees accumulate valuable organizational knowledge and build stronger customer relationships. Reduced turnover cuts recruitment and training costs while maintaining service quality. Satisfied employees become advocates who attract additional talent through referrals and positive employer branding.

6. Understanding Implementation Realities: Common Challenges and How to Overcome Them

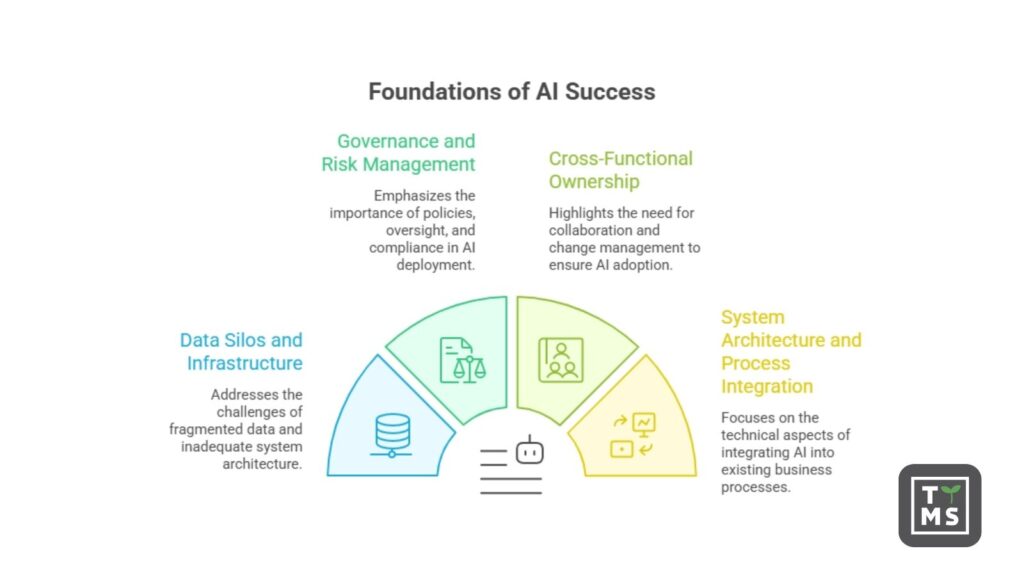

While the benefits of digital process automation are substantial, successful implementation requires a strategic approach. Industry research shows that many digital transformation initiatives fail to meet their objectives, often because of preventable issues rather than limitations of the technology itself.

One of the most significant barriers is user adoption. Employees often revert to legacy ways of working when new automation tools are introduced without sufficient support, training, or communication. Research highlighted by Whatfix points out that poor adoption remains one of the most common reasons digital transformation efforts underperform. The most successful implementations treat change management as a core part of the initiative, investing in culture, continuous enablement, and clear communication about how automation supports employees rather than threatens their roles.

Integration complexity creates another common pitfall. Modern organizations typically operate across hundreds of applications, many of which remain disconnected, creating silos that limit the value of automation. As noted in MuleSoft research, organizations manage large application landscapes while only a relatively small share of systems are fully integrated. This makes seamless process orchestration more difficult and increases the risk of fragmented automation initiatives. To overcome this, organizations need strong data foundations, clear integration architecture, and early attention to connectivity between systems.

Implementation challenges also increase when automation is layered onto inefficient or poorly designed workflows. Automating broken processes does not solve underlying issues – it simply accelerates them. Organizations that achieve the strongest outcomes typically reengineer workflows before automation begins, define clear and measurable objectives, and monitor adoption and performance continuously rather than treating deployment as the finish line.

Strong data quality and system readiness also play a critical role in long-term success. Research discussed by Deloitte suggests that organizations with better data foundations and more mature technology environments are significantly more likely to realize value from AI and automation investments. Addressing data quality, governance, and process consistency early improves the likelihood that automation initiatives will deliver measurable and sustainable business results.

7. How Digital Process Automation Tools Deliver These Benefits

7.1 Key Capabilities of DPA Platforms

Modern DPA solutions provide comprehensive capabilities that enable end-to-end process automation. Workflow engines orchestrate sequences spanning multiple systems, departments, and decision points. Integration frameworks connect disparate applications, allowing data to flow seamlessly across technology landscapes. Process mining tools analyze existing operations to identify automation opportunities and measure improvements.

Artificial intelligence and machine learning capabilities extend automation beyond simple rules-based processing. Natural language processing enables systems to understand unstructured communications. Computer vision extracts information from documents and images. Predictive analytics anticipate outcomes and recommend optimal actions.

7.2 Integration with Existing Systems

Organizations have invested significantly in enterprise applications, databases, and custom systems that support critical operations. Effective automation must work within these existing technology environments rather than requiring wholesale replacement. Modern DPA platforms excel at connecting with established infrastructure through API-based integration with cloud applications, middleware capabilities for legacy systems, and data transformation tools that reconcile different formats and standards.

7.3 Low-Code and No-Code Functionality

Traditional software development creates bottlenecks that slow automation initiatives. Low-code and no-code platforms democratize automation by enabling business users to configure processes without extensive programming knowledge. Visual development environments replace coding with graphical configuration, while pre-built templates and components accelerate implementation.

This accessibility transforms how organizations approach process improvement. Business teams can automate departmental processes without competing for IT resources. Faster implementation cycles enable experimentation and iteration. Broader participation in automation initiatives surfaces more improvement opportunities and builds organizational capabilities.

8. Choosing the Right Digital Process Automation Software: Essential Features to Evaluate

Selecting digital process automation software requires more than comparing feature lists. The right platform should address current operational needs while also providing the flexibility to support future growth, process changes, and evolving business requirements.

Scalability is one of the most important factors to assess. A solution that works well for a limited number of users or workflows may quickly become a constraint as volumes increase, new teams adopt the platform, or business processes become more complex. Organizations should evaluate whether the software can support growth without performance degradation, excessive reconfiguration, or major architectural changes.

Integration flexibility is equally critical. DPA software should connect smoothly with existing systems, data sources, and third-party applications in order to support end-to-end workflows. Without strong integration capabilities, automation efforts can remain isolated and fail to deliver meaningful business value. Compatibility with APIs, legacy systems, and future applications should therefore be a central part of the evaluation process.

User experience also has a direct impact on implementation success. Intuitive interfaces reduce training requirements, accelerate adoption, and shorten time-to-value for both technical and non-technical users. When workflows are easy to understand, configure, and manage, organizations are more likely to achieve consistent use across teams and sustain automation efforts over time.

Analytics and reporting capabilities provide the visibility needed to monitor, manage, and improve automated processes. Real-time dashboards help teams track performance, identify bottlenecks, and respond quickly to operational issues, while historical reporting reveals trends, recurring inefficiencies, and opportunities for optimization. Without this level of visibility, it becomes difficult to measure the true impact of automation or support continuous improvement.

Security and governance should be evaluated with equal care, particularly in environments that involve sensitive data, regulatory requirements, or multiple user roles. Features such as role-based access control, audit trails, approval controls, and data encryption help protect information and ensure that automated workflows remain secure, compliant, and accountable.

Beyond technical capabilities, organizations should also assess the vendor’s implementation approach and long-term support. Onboarding, training, documentation, and ongoing maintenance all influence how quickly value is realized and how effectively the solution performs over time. Pricing should also be reviewed in the context of the organization’s budget, expected usage, and growth plans, ensuring that the platform remains sustainable as adoption increases.

Ultimately, the best DPA software is not the platform with the longest feature list, but the one that best fits the organization’s process maturity, technology landscape, and long-term business goals.

9. How TTMS Can Help You with Digital Process Automation

TTMS brings specialized expertise in implementing digital process automation solutions that deliver measurable business results across financial services, healthcare, manufacturing, and other sectors. As a certified partner of leading technology vendors including Adobe (AEM), Salesforce, and Microsoft, TTMS combines deep technical knowledge with a practical understanding of business processes, refined through numerous successful implementations.

The company’s approach is built around the success factors that help avoid the common failure patterns of automation initiatives. Beginning with a thorough process analysis, TTMS evaluates existing workflows, system landscapes, and organizational capabilities to identify the automation opportunities that generate the most value. This assessment keeps initiatives focused on processes where the benefits justify the investment, while avoiding the trap of automating broken workflows and amplifying existing inefficiencies.

Implementation services span the complete automation lifecycle, with particular strength in the complex integrations that many organizations find challenging. TTMS configures and integrates DPA platforms with existing enterprise systems, drawing on expertise in Microsoft Azure as well as Power Apps and other low-code solutions. Whether connecting legacy systems with modern cloud applications or orchestrating workflows across multiple platforms, the company delivers reliable solutions that work within existing technology investments, helping organizations avoid expensive system replacements.

Managed services support ensures ongoing optimization and adaptation as business needs evolve. TTMS’s long-term client relationships and managed services models enable the company to serve as a strategic partner throughout digital transformation journeys rather than simply a project vendor. This continuous engagement addresses the reality that process automation represents a journey rather than a destination, with technologies evolving and new opportunities emerging continuously.

The company’s Business Intelligence expertise with tools like Power BI creates comprehensive analytics capabilities that maximize the benefits of process automation. Real-time visibility into process performance, combined with predictive analytics, enables clients to identify improvement opportunities proactively and measure automation value continuously.

Recognition including Forbes Diamonds awards and ISO certifications reflects TTMS’s track record of successful implementations. For organizations weighing whether to automate their business processes, TTMS offers a consultative approach that evaluates the benefits of process automation in the context of each client’s industry, competitive position, and strategic objectives. This perspective ensures that automation initiatives align with broader business goals while delivering tangible operational improvements that clients can measure and expand over time.

Interested in Digital Process Automation? Get in touch with us!

What is digital process automation?

Digital process automation (DPA) is the automation of end-to-end business processes across systems, data, and people. Instead of focusing on single tasks, DPA connects entire workflows to make them faster, more consistent, and easier to manage at scale.

How is digital process automation different from traditional automation?

Traditional automation usually handles isolated tasks, such as sending notifications or updating records. Digital process automation goes further by coordinating complete workflows across departments and systems, including approvals, validations, exception handling, and reporting.

What are the main benefits of digital process automation?

The main benefits of digital process automation include improved efficiency, fewer manual errors, better operational visibility, faster response times, stronger compliance, lower operating costs, and better use of employee time. It also helps organizations scale processes without increasing resources at the same pace.

Which business processes should be automated first?

The best starting points are high-volume, repetitive, rules-based processes that involve multiple handoffs or frequent delays. Common examples include customer onboarding, invoice processing, approvals, internal service requests, document workflows, and compliance-related processes.

How does digital process automation improve customer experience?

DPA improves customer experience by reducing response times, standardizing service delivery, and minimizing errors. Customers benefit from faster processing, more consistent interactions, and smoother journeys across channels, especially in processes that previously relied on manual steps.

Can digital process automation work with existing and legacy systems?

Yes, modern DPA platforms are designed to integrate with existing business systems, including legacy applications. Strong integration capabilities, APIs, middleware, and data transformation tools allow organizations to automate processes without replacing their entire technology stack.

How long does it take to see ROI from digital process automation?

The time to ROI depends on the complexity of the process, the quality of integration, and user adoption. In many cases, organizations begin to see value within months, especially when they automate high-volume workflows with clear inefficiencies and measurable business impact.

What are the most common challenges in DPA implementation?

The most common challenges include automating poorly designed processes, integration complexity, weak data quality, and low user adoption. Successful implementations usually combine process redesign, strong change management, early user involvement, and continuous performance monitoring.

What should organizations look for in digital process automation software?

Organizations should evaluate scalability, integration flexibility, user experience, analytics and reporting, security, governance, and vendor support. The best DPA software is not simply the platform with the most features, but the one that best fits the organization’s processes, systems, and long-term business goals.
