Shadow AI refers to employees using generative AI tools and “AI features” without formal approval or oversight. It has become a board-level exposure rather than just an IT annoyance. Gartner’s 2025 survey of cybersecurity leaders found that 69% of organizations suspect or have evidence that staff are using prohibited public GenAI, and Gartner forecasts that by 2030 more than 40% of enterprises will experience security or compliance incidents linked to unauthorized Shadow AI.

What makes Shadow AI uniquely dangerous (compared to classic shadow IT) is that it blends data handling with automated reasoning: sensitive inputs can leak (privacy, trade secrets, regulated data), outputs can be trusted too quickly (“machine trust”), and agentic or semi-autonomous use can amplify errors or exploitation at scale.

Against this backdrop, ISO/IEC 42001 – the first international management system standard dedicated to AI – has become a practical way to operationalize AI governance: build an AI Management System (AIMS), create visibility, assign accountability, manage risk across the AI lifecycle, and continuously improve controls.

1. Why Shadow AI is now a board-level exposure

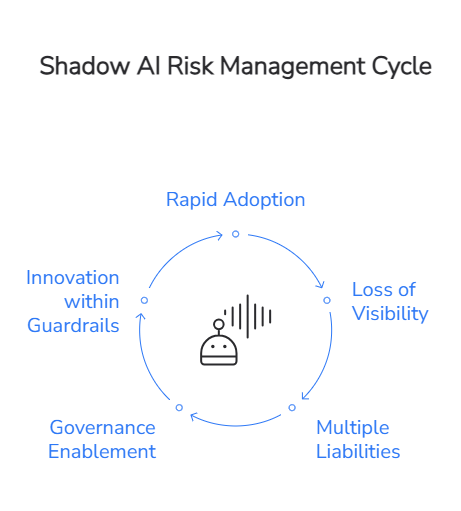

Shadow AI spreads for the same reason shadow IT did: it’s fast, convenient, and often feels “cheaper” than waiting for procurement, security review, and architecture approval. But generative AI adoption has accelerated this dynamic. Early adoption often occurred outside corporate IT, leaving CIOs and CISOs struggling to regain visibility and control over tools that are already embedded in daily operations.

The business risk profile is broader than “data leakage.” In practice, Shadow AI can create multiple simultaneous liabilities:

- Confidentiality and IP loss when employees paste regulated or proprietary information into tools outside organizational visibility.

- Security exposure (including new “attack surfaces”) when AI tools interact with identities, APIs, and internal infrastructure in ways existing controls do not anticipate.

- Decision risk when AI outputs influence customer, legal, HR, or financial actions without adequate human oversight, testing, or traceability.

A key leadership challenge is that “banning AI” rarely works in practice; it tends to drive usage further underground. Modern guidance increasingly points toward governed enablement: approved tools, clear policies, audits, monitoring, and user education – so employees can innovate inside guardrails rather than outside them.

2. What ISO/IEC 42001 adds that most AI programs are missing

ISO/IEC 42001 is an international standard that specifies requirements for establishing, implementing, maintaining, and continually improving an Artificial Intelligence Management System (AIMS) within an organization – whether you build AI, deploy AI, or both.

Two practical points matter for executive sponsors and procurement leaders:

- First, ISO/IEC 42001 is a management system approach – comparable in structure and intent to other ISO management standards – so it is designed to be used alongside existing governance foundations like ISO/IEC 27001 (information security) and ISO/IEC 27701 (privacy).

- Second, the standard is not just a “policy exercise.” Practitioner guidance emphasizes that certification involves meeting a structured set of controls/objectives (often summarized as 38 controls across 9 control objectives) spanning areas such as risk and impact assessment, AI lifecycle management, and data governance.

For Shadow AI specifically, ISO/IEC 42001 shifts an organization from “reacting to AI usage” to running AI as a governed capability: defining scope, establishing accountability, managing risks, monitoring performance, and improving controls continuously – so that unknown AI use becomes a governance failure to detect and correct, not an invisible norm.

3. How ISO 42001 turns Shadow AI into governed AI

Shadow AI thrives where organizations lack four basics: visibility, risk discipline, lifecycle control, and oversight. ISO/IEC 42001 is valuable because it forces these to become repeatable operational processes rather than ad hoc interventions.

Visibility becomes an explicit deliverable. In practice, AI governance starts with a clear inventory of where AI is used, what data it touches, and what decisions it influences. TTMS’ own guidance on certifications and governance frames AI governance exactly this way – inventory first, then controls, then auditability.

A concrete pattern emerging among early ISO/IEC 42001 adopters is formal registries of AI assets and models. For example, CM.com describes establishing an “AI Artifact Resource Registry” documenting its AI models as part of its ISO 42001 program – illustrating the operational expectation that AI use is tracked and managed, not guessed.
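A registry of this kind can start very small. The following Python sketch is illustrative only: the record fields, the example entries, and the `find_governance_gaps` helper are assumptions made for this example, not a structure prescribed by ISO/IEC 42001 or used by any organization named here. It shows how an inventory entry can capture where AI is used, by whom, on what data, and under what controls, and how unapproved or uncontrolled entries can be flagged.

```python
from dataclasses import dataclass, field


@dataclass
class AIAssetRecord:
    """One entry in a hypothetical AI inventory (all field names are illustrative)."""
    name: str
    owner: str                        # accountable person or team
    data_categories: list[str]        # e.g. "customer PII", "contracts"
    decisions_influenced: list[str]   # e.g. "contract review"
    approved: bool = False            # has the tool passed formal approval?
    controls: list[str] = field(default_factory=list)  # documented safeguards


def find_governance_gaps(records: list[AIAssetRecord]) -> list[str]:
    """Return names of assets that are unapproved or have no documented controls."""
    return [r.name for r in records if not r.approved or not r.controls]


# Example entries are invented for illustration.
inventory = [
    AIAssetRecord(
        name="contract-summarizer",
        owner="Legal Ops",
        data_categories=["contracts"],
        decisions_influenced=["contract review"],
        approved=True,
        controls=["human review", "access logging"],
    ),
    AIAssetRecord(
        name="public-chatbot-in-browser",
        owner="unknown",
        data_categories=["customer PII"],
        decisions_influenced=["email drafting"],
    ),
]

print(find_governance_gaps(inventory))  # ['public-chatbot-in-browser']
```

In a real program the same fields would more likely live in a GRC tool or asset database; the point of the sketch is only that each entry has an owner, a data scope, and a list of controls that can be audited.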

Risk management stops being optional. Gartner’s recommended response to Shadow AI includes enterprise-wide AI usage policies, regular audits for Shadow AI activity, and incorporating GenAI risk evaluation into SaaS assessments – measures that align with the management-system logic of ISO/IEC 42001 (policy → implementation → audit → improvement).

Lifecycle control replaces “tool sprawl.” A consistent theme in ISO/IEC 42001 interpretations is lifecycle discipline – from design and development through validation, deployment, monitoring, and retirement – so that AI components are governed like other critical systems, with evidence and accountability across changes.

Human oversight becomes a defined operating model. One of the most damaging Shadow AI patterns is “silent delegation”: employees rely on AI output without defined review thresholds or escalation paths. Modern governance frameworks stress that responsible AI use depends on roles, competence, training, and authority – so oversight is real, not nominal.

The practical executive takeaway is straightforward: if your organization can’t confidently answer “where AI is used, by whom, on what data, and under what controls,” you are already in Shadow AI territory – and ISO/IEC 42001 is one of the clearest operational frameworks available to fix that.

4. EU AI Act pressure: Shadow AI becomes a compliance and liability problem

The EU AI Act is rolling out in phases. The AI Act Service Desk summarizes a progressive timeline with a “full roll-out by 2 August 2027,” including: AI literacy provisions applicable from 2 February 2025; governance and general-purpose AI (GPAI) obligations applicable from 2 August 2025; and Annex III high-risk obligations (plus key transparency requirements) applying from 2 August 2026.
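For planning purposes, the phased timeline above can be expressed as a simple lookup. This is a minimal sketch that uses only the dates stated in this section; the milestone labels are paraphrases, the `applicable_milestones` function name is an assumption for illustration, and the dates may shift if the amendments proposed in the Digital Omnibus on AI are adopted.

```python
from datetime import date

# Milestones as summarized in this section; labels are paraphrased, not legal text.
AI_ACT_MILESTONES = [
    (date(2025, 2, 2), "AI literacy provisions"),
    (date(2025, 8, 2), "Governance and general-purpose AI (GPAI) obligations"),
    (date(2026, 8, 2), "Annex III high-risk obligations and key transparency requirements"),
    (date(2027, 8, 2), "Full roll-out"),
]


def applicable_milestones(as_of: date) -> list[str]:
    """Return the milestones whose start date is on or before `as_of`."""
    return [label for start, label in AI_ACT_MILESTONES if start <= as_of]


for label in applicable_milestones(date(2025, 9, 1)):
    print(label)
```

Called with 1 September 2025, the function lists the first two milestones, since the later two start dates have not yet been reached.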

For executive teams, two issues make Shadow AI particularly risky under the AI Act:

- If Shadow AI touches a high-risk use case, you may become a “deployer” with concrete obligations – without knowing it. The AI Act Service Desk’s summary of Article 26 highlights deployer duties including using systems according to instructions, assigning competent human oversight, monitoring operation, managing input data, keeping logs (at least six months), reporting risks/incidents to providers/authorities, and notifying workers/representatives when used in the workplace.

- The cost of getting it wrong is designed to be “dissuasive.” The European Commission’s communications on the AI Act describe top-tier fines reaching up to €35 million or 7% of global annual turnover (whichever is higher) for the most serious infringements, with lower but still significant fine tiers for other violations.
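The “whichever is higher” rule for the top fine tier is easy to misread, so a short worked calculation may help. The sketch below is an illustration of the ceiling only, using the two figures quoted above; the function name and the turnover values are assumptions for this example, and an actual fine would be set by the competent authority and could be well below the ceiling.

```python
FIXED_CAP_EUR = 35_000_000.0   # fixed ceiling quoted for the most serious infringements
TURNOVER_CAP_PERCENT = 7       # percentage of global annual turnover


def top_tier_fine_ceiling_eur(global_annual_turnover_eur: float) -> float:
    """Return the top-tier ceiling: the higher of the fixed cap and 7% of turnover."""
    turnover_cap = global_annual_turnover_eur * TURNOVER_CAP_PERCENT / 100
    return max(FIXED_CAP_EUR, turnover_cap)


# Hypothetical company with EUR 200 million turnover: 7% is EUR 14 million,
# so the EUR 35 million fixed cap is the higher figure.
print(top_tier_fine_ceiling_eur(200_000_000))    # 35000000.0

# Hypothetical company with EUR 1 billion turnover: 7% is EUR 70 million,
# which exceeds the fixed cap.
print(top_tier_fine_ceiling_eur(1_000_000_000))  # 70000000.0
```

The crossover sits at EUR 500 million in turnover: below it the fixed cap dominates, above it the percentage does.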

It is also important – especially for 2026 planning – to acknowledge regulatory uncertainty around timelines. On 19 November 2025, the European Commission proposed targeted amendments (“Digital Omnibus on AI”) intended to smooth implementation. The European Parliament’s Legislative Train summary explains that the proposal would link high-risk applicability to the availability of harmonized standards/support tools (with an outer limit of 2 December 2027 for Annex III high-risk systems and 2 August 2028 for Annex I).

In parallel, the EDPB and EDPS Joint Opinion discusses the same proposal and explicitly describes moving key high-risk start dates and extending certain “grandfathering” cut-off dates (e.g., from 2 August 2026 to 2 December 2027 in the proposal’s logic).

Regardless of exact deadlines, the direction is stable: Europe is formalizing expectations around AI risk management, transparency, documentation, and oversight – precisely the areas where Shadow AI is weakest. TTMS’ analysis of the EU AI Act implementation highlights key milestones (including the GPAI Code of Practice and staged deadlines through 2027) and frames compliance as a leadership and reputation issue, not only a legal one.

The European Commission describes the General-Purpose AI Code of Practice (published 10 July 2025) as a voluntary tool to help providers meet AI Act obligations on transparency, copyright, and safety/security.

5. Why TTMS is positioned to lead on AI governance

TTMS treats AI governance as an operational discipline rather than a marketing claim. It is embedded in how AI solutions are designed, delivered, and monitored.

In February 2026, TTMS became the first Polish company to receive ISO/IEC 42001 certification for an Artificial Intelligence Management System (AIMS), following an independent audit conducted by TÜV Nord Poland. This certification confirms that AI-related projects delivered by TTMS operate within a structured governance framework covering risk assessment, lifecycle control, accountability, and continuous improvement.

For clients, this translates into measurable risk reduction. AI solutions are developed and deployed under defined oversight mechanisms, documented processes, and auditable controls. In the context of the EU AI Act and increasing regulatory scrutiny, this provides decision-makers with greater confidence that AI initiatives will not evolve into unmanaged compliance exposure.

From a procurement perspective, ISO/IEC 42001 certification also reduces due diligence complexity. Enterprise and regulated buyers increasingly use formal certifications as pre-selection criteria. Working with a partner that already operates under an accredited AI management system lowers audit burden, shortens vendor evaluation cycles, and aligns AI delivery with existing governance and compliance frameworks.

6. Build governed AI with TTMS

If you are responsible for AI investments, Shadow AI is the clearest warning sign that you need an AI governance operating model – not just new tools. ISO/IEC 42001 provides a structured, auditable way to build that operating model, while the EU AI Act increasingly raises the cost of undocumented, uncontrolled AI usage.

For decision-makers who want to move fast without drifting into Shadow AI, TTMS has published practical, business-facing resources on what the EU AI Act means and how implementation is evolving, including TTMS’ EU AI Act overview and the 2025 update on code of practice, enforcement, and timelines.

For procurement teams evaluating partners, TTMS also outlines the certifications that increasingly define “enterprise-ready” delivery capability (including ISO/IEC 42001).

Below is TTMS’ AI product portfolio – each designed to address real business needs while fitting into a governance-first approach:

- AI4Legal – AI solutions for law firms that automate work such as analyzing court documents, generating contracts from templates, and processing transcripts to improve speed and reduce errors.

- AI4Content (AI Document Analysis Tool) – Secure, customizable document analysis that generates structured summaries/reports, with options for local or customer-controlled cloud processing and RAG-based accuracy improvements.

- AI4E-learning – An AI-powered authoring platform that turns internal materials into professional training content and exports ready-to-use SCORM packages for LMS deployment.

- AI4Knowledge – A knowledge management platform that becomes a central hub for procedures and guidelines, enabling employees to ask questions and retrieve answers aligned with company standards.

- AI4Localisation – An AI translation platform tailored to industry context and communication style, supporting consistent terminology and customizable tone across content.

- AML Track – AML compliance and screening software that automates customer verification against sanction lists, generates reports, and supports audit trails for AML/CTF processes.

- AI4Hire – AI-driven resume/CV screening and resource allocation support, designed to analyze CVs deeply (beyond keyword matching) and provide evidence-based recommendations.

- QATANA – An AI-powered test management tool that streamlines the test lifecycle with AI-assisted test case creation and secure on-premise deployment options.

FAQ

What is Shadow AI and why is it a serious enterprise risk?

Shadow AI refers to the use of generative AI tools, embedded AI features in SaaS platforms, or autonomous AI agents without formal approval, documentation, or oversight. For enterprises, this creates significant security and compliance exposure. Sensitive data may be entered into uncontrolled systems, intellectual property can be leaked, and AI-generated outputs may influence strategic, financial, HR, or legal decisions without validation. In regulated environments, uncontrolled AI usage can also trigger obligations under the EU AI Act. As AI becomes embedded in daily workflows, Shadow AI evolves from an IT visibility issue into a board-level risk management concern.

How does ISO/IEC 42001 help organizations control Shadow AI?

ISO/IEC 42001 establishes a formal Artificial Intelligence Management System (AIMS) that enables organizations to identify, document, assess, and monitor AI usage across the enterprise. Through structured AI risk management, lifecycle controls, accountability mechanisms, and defined human oversight processes, ISO 42001 certification helps eliminate uncontrolled AI deployments. Instead of reacting to unauthorized usage, companies implement a proactive AI governance framework that ensures transparency, traceability, and auditability. This structured approach significantly reduces the likelihood that Shadow AI will lead to security incidents, compliance failures, or regulatory penalties.

How is ISO/IEC 42001 connected to the EU AI Act?

Although ISO/IEC 42001 is a voluntary international standard and the EU AI Act is a binding regulation, the two frameworks are strongly aligned in practice. The AI Act introduces obligations for providers and deployers of high-risk AI systems, including documentation requirements, risk management procedures, monitoring obligations, and human oversight mechanisms. An AI Management System aligned with ISO 42001 supports these requirements by embedding governance discipline into everyday AI operations. Organizations that implement ISO/IEC 42001 are therefore better positioned to demonstrate AI Act compliance readiness, especially in areas related to AI risk control, transparency, and accountability.

Why does ISO 42001 certification matter in procurement and vendor selection?

For enterprise buyers and regulated organizations, ISO 42001 certification serves as independent confirmation that an AI provider operates within a formal AI governance and risk management framework. It indicates that AI solutions are developed, deployed, and maintained under documented controls covering lifecycle management, accountability, and continuous improvement. In many industries, certifications are increasingly used as pre-selection criteria during procurement processes. Choosing a partner with ISO/IEC 42001 certification reduces due diligence complexity, shortens vendor evaluation cycles, and lowers compliance and operational risk for decision-makers.

How can organizations scale AI innovation while ensuring AI Act compliance?

Scaling AI responsibly requires balancing innovation with governance discipline. Organizations should begin by mapping existing AI usage, identifying potential high-risk AI systems under the EU AI Act, and implementing structured AI risk management processes. Clear internal policies, defined oversight roles, data governance controls, and incident reporting procedures are essential. Establishing an AI Management System aligned with ISO/IEC 42001 provides a scalable foundation that supports both regulatory readiness and long-term AI innovation. Rather than slowing transformation, structured AI governance enables organizations to deploy AI solutions confidently while minimizing legal, financial, and reputational risk.